Overview

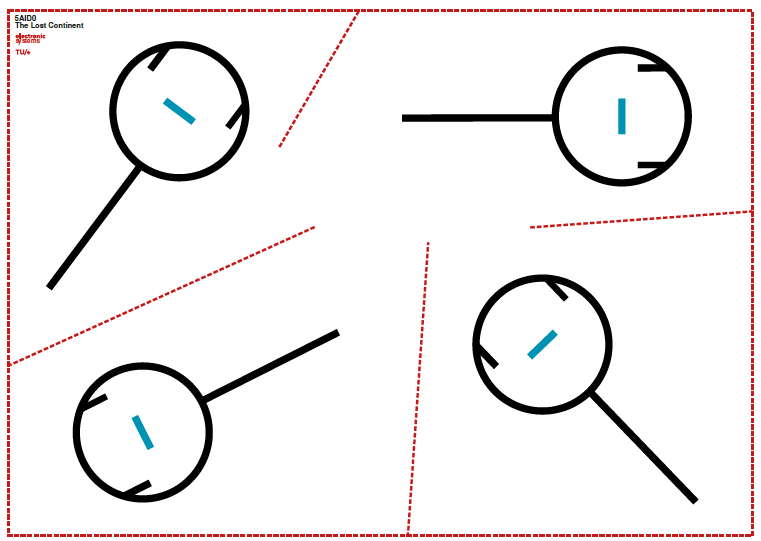

In the Autonomous Vehicles course (5AID0, Q2 2024-2025) at TU Eindhoven, our interdisciplinary team of 6 built a system of three autonomous robots that explore an unknown arena ("The Lost Continent"), locate photographs of historical figures, wirelessly transmit captured images to a base station, and identify the individuals using facial recognition — all without human intervention.

System Architecture

The system integrates four subsystems:

1. Navigation

The robots navigate using a combination of:

- Line-following with infrared sensors for path tracking

- Wall-following with ultrasonic sensors for maze exploration

- State machines to manage transitions between navigation modes
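Each of the two following behaviors can be sketched as a proportional controller. The sketch is in Python for readability, although the robots themselves ran Arduino C++. Everything in it is an assumption:

- two IR reflectance sensors straddling a dark line, with readings normalized from 0.0 (bright floor) to 1.0 (dark line)
- one ultrasonic sensor facing a wall on the robot's right
- wheel speeds from -1.0 to 1.0
- hypothetical gains, base speed, and target distance, not the team's tuning

```python
# Hypothetical tuning values; real gains depend on motors, sensors, and floor.
BASE_SPEED = 0.5
LINE_GAIN = 0.8
WALL_GAIN = 0.05        # speed change per centimeter of distance error
WALL_TARGET_CM = 15.0


def clamp(value, low=-1.0, high=1.0):
    """Limit a wheel command to the allowed speed range."""
    return max(low, min(high, value))


def line_follow(ir_left, ir_right):
    """Steer toward the side whose IR sensor sees more of the dark line.

    Returns (left_wheel, right_wheel) speeds.
    """
    error = ir_left - ir_right  # > 0: the line has drifted to the left
    turn = LINE_GAIN * error
    # Turning left means slowing the left wheel and speeding up the right one.
    return clamp(BASE_SPEED - turn), clamp(BASE_SPEED + turn)


def wall_follow(distance_cm):
    """Hold a right-hand wall at WALL_TARGET_CM using a side ultrasonic sensor."""
    error = distance_cm - WALL_TARGET_CM  # > 0: too far from the wall
    turn = WALL_GAIN * error
    # Too far from a right-hand wall: turn right (left wheel faster).
    return clamp(BASE_SPEED + turn), clamp(BASE_SPEED - turn)
```

With equal IR readings, `line_follow(0.5, 0.5)` returns `(0.5, 0.5)` and the robot drives straight. A reading only on the left sensor slows the left wheel, so the robot turns back toward the line. Pure proportional control is only the simplest option; adding a derivative term would damp oscillation on sharp bends.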

Robots start at arbitrary positions and must autonomously find and follow paths without prior map knowledge.
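The mode switching can be sketched as a small state machine. The four states, the boolean inputs, and the priority order (target, then line, then wall) are hypothetical illustrations, not the team's actual design. A SEARCH state is what lets a robot cope with an arbitrary start. With no line or wall in view, it stays in SEARCH until one appears.

```python
from enum import Enum, auto


class Mode(Enum):
    SEARCH = auto()       # no path found yet, e.g. right after an arbitrary start
    LINE_FOLLOW = auto()
    WALL_FOLLOW = auto()
    CAPTURE = auto()      # stopped in front of a photograph


def next_mode(mode, line_seen, wall_near, target_seen, capture_done):
    """Return the next navigation mode from boolean sensor summaries.

    target_seen is assumed to be False for photographs already captured;
    otherwise the robot would re-enter CAPTURE at the same stand.
    """
    if mode is Mode.CAPTURE:
        # Hold position until the image has been taken, then resume exploring.
        return Mode.SEARCH if capture_done else Mode.CAPTURE
    if target_seen:
        return Mode.CAPTURE
    if line_seen:
        return Mode.LINE_FOLLOW
    if wall_near:
        return Mode.WALL_FOLLOW
    # Lost both line and wall: fall back to the search/recovery behavior.
    return Mode.SEARCH
```

Keeping the transition logic in one pure function, separate from the motor commands, makes it easy to test on a laptop before running it on the robot.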

2. Image Capture

When a robot detects a target (a photograph mounted on a stand), it uses its onboard camera to capture the image in YUV422 format.
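In YUV422, every pixel has its own luma (Y) value, while each pair of horizontally adjacent pixels shares one U and one V chroma sample. A frame therefore takes 2 bytes per pixel. Before facial recognition, the frame has to be converted to RGB at some point in the pipeline.

The sketch below is minimal. It assumes YUYV byte order (Y0 U Y1 V) and full-range BT.601 (JPEG-style) coefficients. Camera modules differ in byte order (UYVY is also common) and in value range, so the datasheet of the actual camera determines both.

```python
def clamp_byte(x):
    """Round and clamp a channel value to 0..255."""
    return max(0, min(255, round(x)))


def yuyv_to_rgb(data, width, height):
    """Convert a packed YUYV (YUV422) frame to a flat list of (R, G, B) tuples."""
    if width % 2 or len(data) != width * height * 2:
        raise ValueError("expected an even width and 2 bytes per pixel")
    pixels = []
    for i in range(0, len(data), 4):
        y0, u, y1, v = data[i], data[i + 1], data[i + 2], data[i + 3]
        d, e = u - 128, v - 128  # chroma is stored offset by 128
        for y in (y0, y1):  # both pixels share the same U and V
            r = y + 1.402 * e
            g = y - 0.344136 * d - 0.714136 * e
            b = y + 1.772 * d
            pixels.append((clamp_byte(r), clamp_byte(g), clamp_byte(b)))
    return pixels
```

For example, the neutral gray pair `yuyv_to_rgb(bytes([128, 128, 128, 128]), 2, 1)` returns `[(128, 128, 128), (128, 128, 128)]`.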

3. Wireless Communication

Captured images are transmitted from the robots to a base station laptop via XBee/ZigBee wireless modules. The protocol handles:

- Image data packetization and reassembly

- Robot-to-robot coordination to avoid interference

- Error handling for dropped packets
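The packet format is not described here, so the following is a sketch under stated assumptions. Each chunk carries a hypothetical 5-byte header (image ID, 16-bit sequence number, 16-bit total count) and ends with a 1-byte additive checksum. Payloads are kept small because XBee modules cap the payload of a single RF packet, and the exact limit depends on firmware and addressing mode. The receiver reassembles the chunks by sequence number, discards corrupted chunks, and reports which ones are still missing so they can be requested again.

```python
import struct

HEADER = struct.Struct(">BHH")  # image id, sequence number, total chunks
CHUNK_SIZE = 64                 # hypothetical payload bytes per packet


def checksum(data):
    """8-bit additive checksum (a CRC would catch more error patterns)."""
    return sum(data) & 0xFF


def packetize(image_id, image, chunk_size=CHUNK_SIZE):
    """Split image bytes into packets: header + payload + 1-byte checksum."""
    chunks = [image[i:i + chunk_size] for i in range(0, len(image), chunk_size)]
    packets = []
    for seq, chunk in enumerate(chunks):
        body = HEADER.pack(image_id, seq, len(chunks)) + chunk
        packets.append(body + bytes([checksum(body)]))
    return packets


class Reassembler:
    """Collect the packets of one image, dropping any that fail the checksum."""

    def __init__(self):
        self.chunks = {}
        self.total = None

    def add(self, packet):
        if len(packet) < HEADER.size + 1:
            return False  # too short to hold a header and a checksum
        body, received_sum = packet[:-1], packet[-1]
        if checksum(body) != received_sum:
            return False  # corrupted: ignore it and wait for a resend
        _image_id, seq, total = HEADER.unpack_from(body)
        self.total = total
        self.chunks[seq] = bytes(body[HEADER.size:])
        return True

    def missing(self):
        """Sequence numbers still needed, or None if nothing has arrived yet."""
        if self.total is None:
            return None
        return [s for s in range(self.total) if s not in self.chunks]

    def image(self):
        """The complete image bytes, or None while chunks are missing."""
        if self.missing() != []:
            return None
        return b"".join(self.chunks[s] for s in range(self.total))
```

If the receiver sends `missing()` back to the robot, the robot retransmits only the lost chunks instead of the whole image, which matters on a slow link.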

4. Facial Recognition

On the base station, a Python pipeline processes received images:

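```python
# Simplified recognition pipeline
from pathlib import Path

import face_recognition
import numpy as np


def load_known_faces(folder):
    # Hypothetical helper: one photo per person, file name used as the label
    names, encodings = [], []
    for path in sorted(Path(folder).glob("*.jpg")):
        found = face_recognition.face_encodings(face_recognition.load_image_file(path))
        if found:  # skip reference photos with no detectable face
            names.append(path.stem)
            encodings.append(found[0])
    return names, encodings


known_names, known_encodings = load_known_faces("database/")
received_path = "received/capture.jpg"  # hypothetical path of a reassembled image
captured_image = face_recognition.load_image_file(received_path)
face_locations = face_recognition.face_locations(captured_image)
face_encodings = face_recognition.face_encodings(captured_image, face_locations)

for encoding in face_encodings:
    matches = face_recognition.compare_faces(known_encodings, encoding)
    if any(matches):
        # Several references may match; take the closest one
        distances = face_recognition.face_distance(known_encodings, encoding)
        name = known_names[int(np.argmin(distances))]
        # Identify and fetch Wikipedia entry for `name`
        print("Identified:", name)
```

The system successfully identified multiple historical figures from captured images.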
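The pipeline's last step, fetching a Wikipedia entry for the identified person, is not shown. One possible approach uses the public Wikipedia REST API page-summary endpoint. It assumes the database labels are English article titles such as `Marie Curie`. The function names and the User-Agent string are hypothetical.

```python
import json
import urllib.parse
import urllib.request

SUMMARY_ENDPOINT = "https://en.wikipedia.org/api/rest_v1/page/summary/"


def summary_url(name):
    """Build the summary URL for an article title (spaces become underscores)."""
    return SUMMARY_ENDPOINT + urllib.parse.quote(name.replace(" ", "_"), safe="")


def fetch_summary(name, timeout=10):
    """Return the plain-text introduction of the person's Wikipedia article."""
    request = urllib.request.Request(
        summary_url(name),
        headers={"User-Agent": "lost-continent-demo/0.1 (course project)"},  # hypothetical
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        data = json.load(response)
    return data.get("extract", "")
```

After a face has been matched to a label, `fetch_summary(label)` returns the article's introduction. Network failures raise `urllib.error.URLError`, so the base station should catch that exception rather than crash mid-run.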

Technical Challenges

- Arbitrary start positions: Robots needed robust recovery behavior to find paths from any starting location

- Image quality over wireless: XBee bandwidth limitations required careful image compression and transmission protocols

- Multi-robot coordination: Preventing collisions and communication interference between three simultaneously operating robots

- Lighting variation: Facial recognition had to work under variable arena lighting conditions
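A back-of-the-envelope estimate shows why bandwidth dominated. The sketch rests on four placeholder assumptions, none of them measurements from the project:

- a 160×120 frame
- 64-byte payloads
- 6 bytes of per-packet overhead
- an effective end-to-end throughput of 20,000 bit/s

It compares sending the raw YUV422 frame with sending only the luma (Y) bytes, which is one simple way to halve the payload when color is not needed.

```python
import math


def transfer_seconds(image_bytes, payload=64, overhead=6, throughput_bps=20_000):
    """Estimate airtime for one image split into fixed-size packets.

    All defaults are hypothetical placeholders, not measured XBee figures.
    """
    packets = math.ceil(image_bytes / payload)
    total_bits = (image_bytes + packets * overhead) * 8
    return total_bits / throughput_bps


def luma_only(yuyv):
    """Keep only the Y bytes of a YUYV frame (every even-indexed byte)."""
    return yuyv[0::2]


WIDTH, HEIGHT = 160, 120           # hypothetical frame size
frame = bytes(WIDTH * HEIGHT * 2)  # YUV422: 2 bytes per pixel
print(f"raw frame: {transfer_seconds(len(frame)):.1f} s")
print(f"luma only: {transfer_seconds(len(luma_only(frame))):.1f} s")
```

With these placeholder numbers, the raw 38,400-byte frame needs about 16.8 s of airtime, and the luma-only version needs about 8.4 s. For UYVY byte order, the Y bytes sit at odd indices instead.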

Results & Discussion

This project was a crash course in full-stack robotics, from low-level Arduino sensor reading to high-level Python computer vision. The hardest part was making all subsystems work together reliably: a sensor that works perfectly on the bench can fail unpredictably in an arena with different lighting and surface conditions. Working in an interdisciplinary team of computer science, automotive, and mechanical engineering students also showed me how different engineering disciplines approach the same problem.

Technologies Used

Arduino (C++), Python (face_recognition, OpenCV), XBee/ZigBee wireless, infrared sensors, ultrasonic sensors, camera modules, state machines